I recently read an excellent blog from Matthew Evans (@head_teach), who wrote about the purpose of monitoring in schools and flipped the traditional narrative, moving from monitoring to ascertain ‘how good is this?’ to a more developmental approach that poses the question ‘what is going on?’ This got me thinking deeply about the decisions we make as leaders around implementation and how we can ensure we are investing our limited time, energy, and resource into the most appropriate course of action, dependent on ‘what’s going on’ here.

I feel that the EEF’s implementation guide is a relatively good starting point when considering this. It offers a useful framework for considering the before, the during and the after of implementation of any kind, which can support leaders in guiding their thinking throughout this complex process. It can be found here: https://d2tic4wvo1iusb.cloudfront.net/eef-guidance-reports/implementation/EEF_Implementation_Guidance_Report_2019.pdf

In this blog I pay particular attention to the ‘explore’ phase, in which one identifies a key priority, explores programmes of practice that could be implemented and examines the fit and feasibility within the school’s context (EEF 2021). I focus on it mostly because my lived experience as a senior leader has taught me that this exploration phase is far from simple. If we don’t get it right (which happens!), it has implications for the rest of the implementation phases and inevitably leads to the need for de-implementation down the line, which further depletes hard-found time and energy. We also need to lay our cards on the table and accept that, although this isn’t ideal, it is a reality. When this situation occurs, as with all errors, the best we can do is adopt a ‘lessons learnt’ stance to refine our professional toolkit for future implementation.

Ideally, however, we want to get this exploration phase as right as possible so that we can identify a well-informed ‘best bet’ and pour resource into the right place, maximising our chances of having meaningful impact on pupils in classrooms.

Let’s start by thinking about the PURPOSE of the explore phase. Inspired by Frayer’s model, let us begin by looking at what the purpose IS NOT. It is not to:

-place blame or poke holes in practice

-solely pick out problems

-inform a well-written document that will be sent to various stakeholders to affirm confidence in the leader’s perceived expertise

-be seen to be ‘exploring’ the state of play for a decision already made by senior leaders

The purpose of the explore phase is to engage in a school-wide, collaborative consideration of an element of practice, with the shared goal of developing an accurate picture of where practice currently sits and what can be done to support improvement in this area, both at the surface level (addressing the visible by-products) and at the level of root causes (the systems, structures, and approaches underlying these).

I place emphasis on this distinction between the by-products and root causes because, quite often, the former overpowers the latter. For example, if reading progress isn’t where it needs to be (the visible by-product), there are multiple potential root causes of this. The ‘explore’ phase allows us to gather evidence of what the root cause is most likely to be, so that we can choose a course of action that will in fact address the issue at hand.

A WORD OF WARNING HERE: hierarchical ‘I’m big, you’re small’ Trunchbull-style assessments of ‘what is good and what is bad’ in this explore phase are most likely to further compound the situation. If collective improvement is to be seen, collective understanding needs to be developed. I’m not contesting that subject specialists or senior leaders should lead this body of work, but I am very aware that this explore phase can often become a ‘mini-Ofsted style assessment’ of practice, which damages the foundation of school improvement: a continuous culture of improvement. Damaging this is not the way to go. Engaging in conversation with teachers and support staff on the ground, and collating and corroborating perspectives, evidence and sources of information, is the best way to build an ACCURATE view of both the priority that needs to be addressed and what is leading to the challenges in this area in the first place.

One without the other is inconsequential. Knowing you have a problem without understanding the root cause isn’t going to resolve the problem, and understanding the root cause without knowing what it leads to leaves us unable to measure whether our theory of change is having tangible impact when addressed.

In ‘The Perils of Perception’ by Bobby Duffy, a key recommendation put forth for managing our misconceptions is ‘we need to tell the story’. He writes:

‘Although facts are important, they are not sufficient given how our brains work. We need to be aware of how people hear and use them, turning them into stories, that might not always lead to the right conclusions. There is no contradiction between facts and stories; you don’t need to choose only one to make your point. The power of stories over us means we need to engage people with both.’

I love this quote from the book because it highlights the co-existence of both qualitative and quantitative information in crafting an accurate picture of the state of play of something in question.

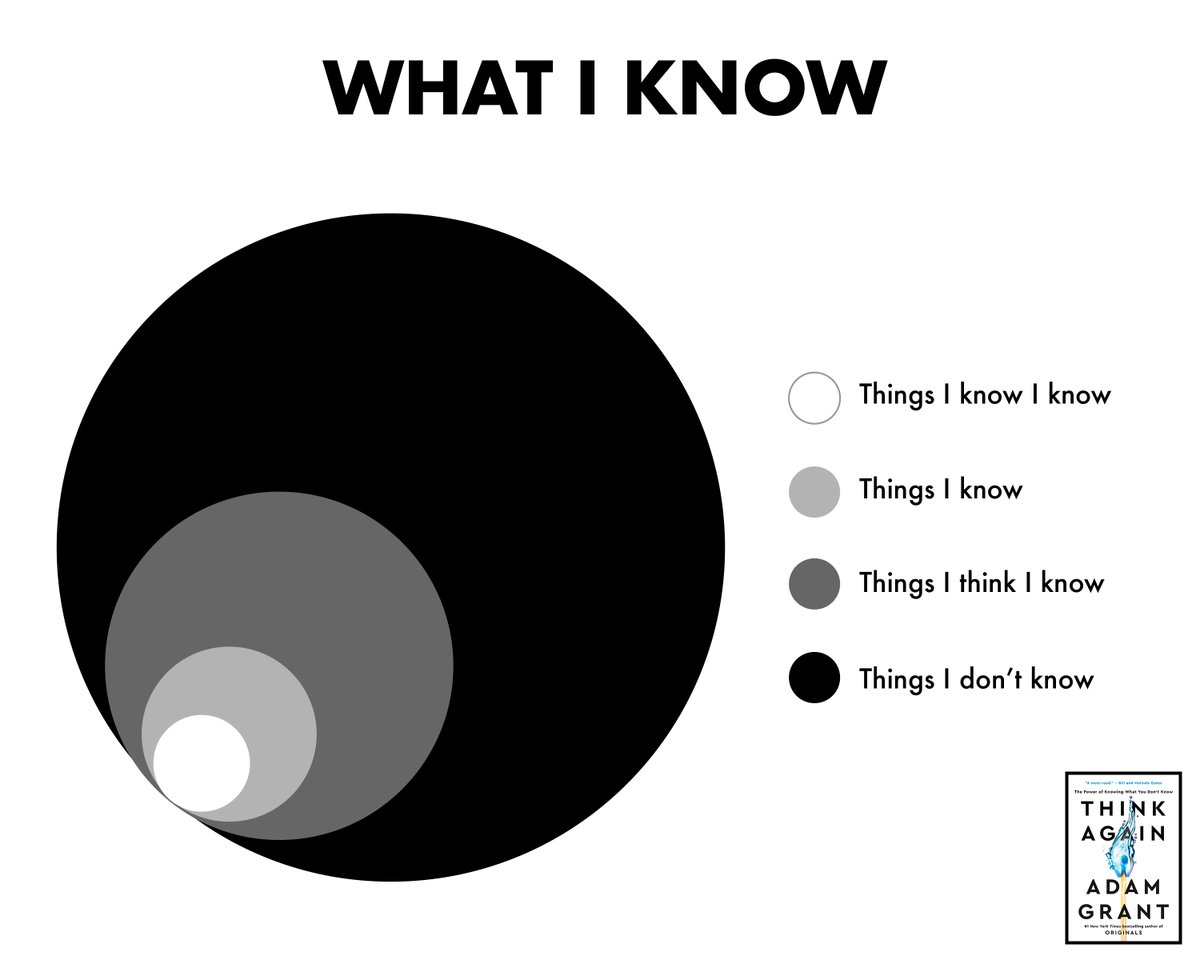

One last thing that I feel is crucial to consider when exploring: a recognition that no matter who we are and what level of ‘expertise’ we hold, ‘you don’t know what you don’t know’ (she says, sheepishly writing this blog!). Adam Grant writes about this extensively in his book ‘Think Again’. The below visual summarises it nicely. We guard against this in the same way we guard against damaging culture within schools: collaboration over competition, development over judgement and an unrelenting sense of purpose…the pupils we serve.